Accelerate Your GitHub Actions with Namespace

Transform your CI/CD pipeline with high-performance runners. Namespace offers unique capabilities for Linux, Windows and macOS to speed up your GitHub workflows.

Why Namespace Runners?

Getting Started

To speed up your workflows with Namespace, connect Namespace to your GitHub organization and make a one-line change to your workflow definition:

Connect your GitHub organization to Namespace

Update your workflows

Change the runs-on field in a workflow file to a Namespace profile or label.

```diff
 jobs:
   build:
-    runs-on: ubuntu-latest
+    runs-on: namespace-profile-default
     steps:
       - name: Checkout
         uses: actions/checkout@v4
       ...
```

Done!

Your GitHub workflow will now run on Namespace!
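For reference, here is a complete minimal workflow file with the one-line change applied. Treat it as a sketch. The file name, the `push` and `pull_request` triggers and the `make test` step are placeholders, not part of Namespace's setup. Only the `runs-on` value comes from the example above.

```yaml
# .github/workflows/ci.yml (hypothetical file name)
name: CI

on:
  push:
  pull_request:

jobs:
  build:
    # Previously: runs-on: ubuntu-latest
    runs-on: namespace-profile-default
    steps:
      - name: Checkout
        uses: actions/checkout@v4
      # Placeholder step: replace with your own build or test commands.
      - name: Test
        run: make test
```

The rest of the job stays as it was. Only the runner selection changes.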

Configure your Runners

Namespace runners offer a high degree of customization and unique capabilities. Start by selecting your target OS and architecture, then pick an optimal shape. Subsequent guides explore how to benefit from each of the available solutions.

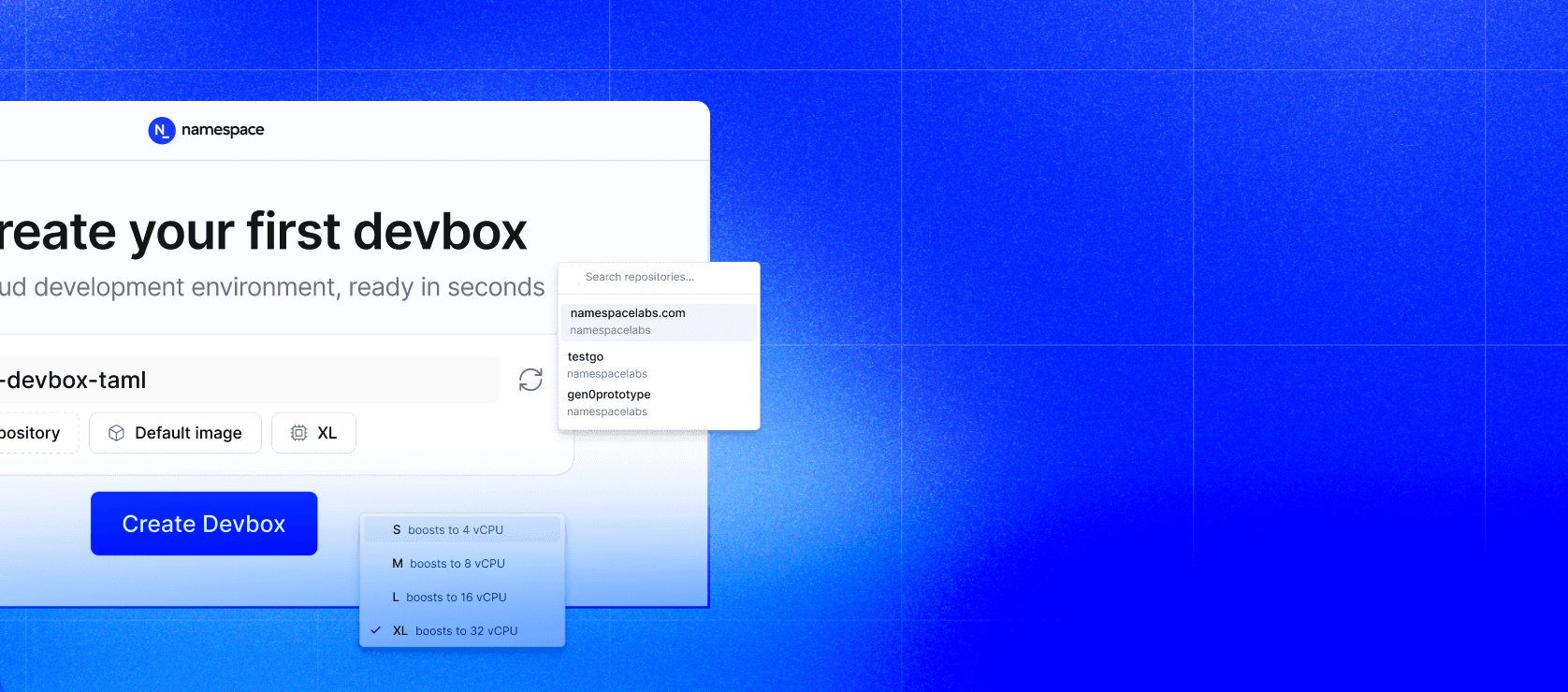

Create a Profile

- Open the Dashboard and press "New Profile".

- Specify a name. The name becomes part of the runs-on label in your workflow file.

- Select whether you want to run on Linux AMD64, Linux ARM64, Windows, or macOS.

- Pick a resource shape. You can change the shape later to find the best fit.

- Confirm by pressing "Create Profile".

Select the Profile

Update the runs-on label in your workflow file to select the runner profile:

```yaml
jobs:
  myjob:
    runs-on: namespace-profile-big-apple
```
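Because the profile is selected per job, a single workflow can mix profiles. This sketch makes two assumptions. First, you have created two more profiles with the hypothetical names `linux-arm64` and `windows`. Second, their labels follow the `namespace-profile-<name>` pattern shown in the examples above. The `run` commands are placeholders.

```yaml
jobs:
  build:
    # Profile from the example above.
    runs-on: namespace-profile-big-apple
    steps:
      - uses: actions/checkout@v4
      - run: make build   # placeholder command

  test-linux-arm64:
    needs: build
    # Hypothetical profile created for Linux ARM64.
    runs-on: namespace-profile-linux-arm64
    steps:
      - uses: actions/checkout@v4
      - run: make test    # placeholder command

  test-windows:
    needs: build
    # Hypothetical profile created for Windows.
    runs-on: namespace-profile-windows
    steps:
      - uses: actions/checkout@v4
      - run: make test    # placeholder command
```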

Namespace Runners are powered by Namespace Compute, which uses best-in-class hardware for every platform:

- Linux AMD64 runs on high-performance AMD EPYC CPUs.

- Linux ARM64 runs on AmpereOne (high-memory configurations) and on Apple M4 Pro and M5 Max.

- Windows AMD64 runs on high-performance AMD EPYC CPUs.

- macOS ARM64 runs on Apple M5 Max (or M4 Pro in some configurations).

Choosing the right resource shape can improve both performance and cost. Larger shapes may be faster if your workflows can make use of the additional resources. Smaller shapes may be cheaper if your current runner is oversized for the workload. We recommend observing a few runs before adjusting the resource shape.
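One way to observe runs side by side is to run the same job on two profiles that differ only in shape. A GitHub Actions matrix does this, and you can then compare the job durations reported for each. This is a sketch. It assumes two hypothetical profiles, `shape-small` and `shape-large`, that target the same OS and architecture with different shapes. The test command is a placeholder.

```yaml
jobs:
  compare-shapes:
    strategy:
      # Run both variants even if one fails, so their timings can be compared.
      fail-fast: false
      matrix:
        profile:
          - namespace-profile-shape-small   # hypothetical smaller shape
          - namespace-profile-shape-large   # hypothetical larger shape
    runs-on: ${{ matrix.profile }}
    steps:
      - uses: actions/checkout@v4
      - run: make test   # placeholder workload
```

If the larger shape is not meaningfully faster across several runs, the workload probably cannot use the extra resources, and the smaller shape is likely the cheaper choice.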

Next Steps: Optimize Your Workflows

Now that you have Namespace Runners configured, enhance your CI/CD pipeline with these advanced features:

Caching Solutions → Learn how to implement cross-invocation caching for dependencies, tools, and build artifacts to dramatically reduce workflow execution time.

High-Performance Docker Builds → Discover how to leverage Remote Builders and local caching strategies for optimal Docker build performance.

Custom Base Images → Speed up workflows by pre-installing your dependencies and tools in custom runner images.

Debugging Made Simple → Access advanced debugging tools and techniques that make troubleshooting GitHub Actions workflows effortless.

Optimizing Usage → Use the insights dashboard to monitor workflow performance, track resource usage, and identify opportunities to reduce costs and improve performance.

Advanced Configuration → Specific configuration options for privileged workflows, container jobs, advanced caching, and more.

Enterprise Support

Need custom machine shapes, dedicated capacity, or specialized support? Our support team can unlock runners with up to 512 GB of RAM and provide dedicated consultation for complex CI/CD requirements.