Cache Volumes: zero-latency caching, Docker image caching, pre-install dependencies

One of our main goals at Namespace is to improve build and test performance, with minimal to no changes to your toolset.

Today, we're announcing a set of upgrades that improve incremental run performance for your GitHub Actions:

- Zero-latency caching: incremental build and test result caching across invocations, backed by high-performance local storage.

- Docker image caching: skip image pulls and unpacks when using the same containers in different runs (e.g. test dependencies).

- Pre-install dependencies: skip installation time on larger dependencies, like browsers used by Cypress or Playwright.

- Faster git checkouts for large monorepos.

To simplify managing this added flexibility, we're also introducing Runner Profiles.

(If you get to the end of the post, you'll also get a teaser of what's coming next.)

Zero-latency Caching

You're likely using actions/cache today, either directly or indirectly via your language-specific action (e.g. actions/setup-go).

Language-specific caching already brings in a noticeable speed-up.

But building and testing locally is often even faster and naturally incremental, without any particular configuration.

Why is that?

The answer is simple: local development relies on each invocation hitting the same filesystem cache. Many of the packages and intermediate build results are already present in your local filesystem when you issue a new build.

The level of re-use is high.

actions/cache attempts to approximate this experience, but it adds a non-trivial cost: the cache has to be downloaded as part of the run, and any changes are then also uploaded to shared block storage. And because your caches grow, you spend more and more time downloading and uploading caches.

It's not uncommon to see users waiting multiple minutes for their actual builds or tests to start, because they're waiting for the cache to download.

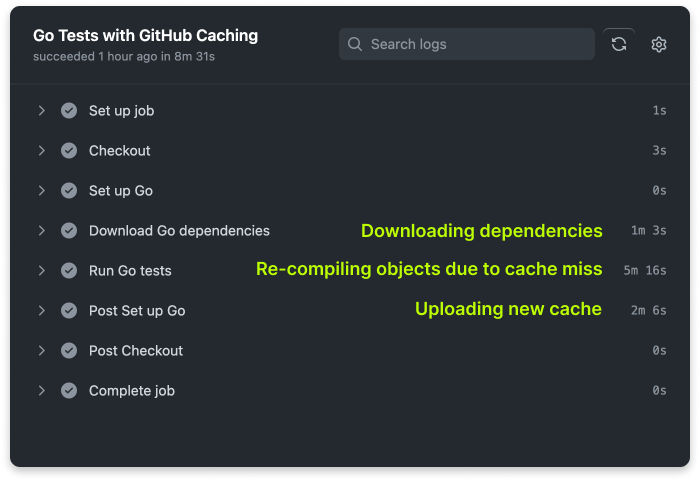

For example, when we change a Go dependency in one of our repositories:

2 minutes of added run time before we get a green tick, yikes. Not to mention the cache miss.
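For reference, a typical actions/cache setup for a Go project looks roughly like the sketch below. It is an illustrative baseline, not the exact workflow from the screenshot; the job name, paths, and key layout follow the common pattern from the actions/cache examples. Because the key is derived from go.sum, changing a dependency produces a new key: the run falls back to an older archive via restore-keys, downloads it in full, and uploads a fresh archive at the end.

```yaml
# Illustrative baseline: explicit actions/cache usage for Go.
name: test
on: push

jobs:
  test:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-go@v5
        with:
          go-version-file: go.mod
          cache: false # caching is handled explicitly by the next step
      - uses: actions/cache@v4
        with:
          # Directories archived, uploaded, and downloaded on every run.
          path: |
            ~/.cache/go-build
            ~/go/pkg/mod
          # The key changes whenever go.sum changes, so a dependency bump
          # is an exact-key miss.
          key: ${{ runner.os }}-go-${{ hashFiles('**/go.sum') }}
          # On a miss, fall back to the most recent cache with this prefix.
          restore-keys: |
            ${{ runner.os }}-go-
      - run: go test ./...
```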

Introducing Cache Volumes

We're flipping this problem on its head with Cache Volumes.

We call it a zero-latency cache because you spend virtually no time waiting for it.

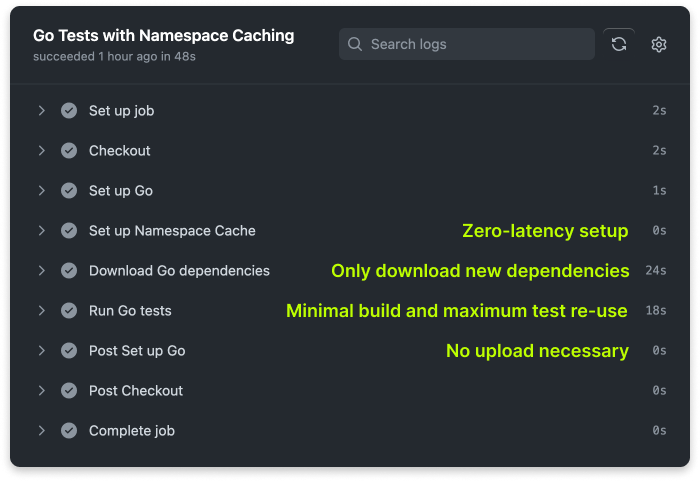

Here's the same change on Cache Volumes:

Full cache re-use from a previous run with zero latency, any additional downloads are incremental, exactly how it behaves in your own workstation.

We've been working on Cache Volumes for many months, and many of our existing customers have already been using them -- and although we still have much to do and tune, today we declare them Generally Available.

A big thank you to all of our early testers for their support and feedback.

How does it work?

When you configure Namespace caching, each run now gets a unique additional disk (a "cache volume") attached to it. With some Namespace magic, we guarantee that provisioning this additional disk with your cache inside takes virtually zero time (more on that in a future blog post).

Cache Volumes were designed with continuous integration in mind. That means supporting scaling out with concurrent runs, without compromising on performance.

We achieve this by giving each run its own clone of the cache, backed by NVMe storage, so you get the best performance in your builds and tests.

Getting started

In typical Namespace spirit, using Cache Volumes is trivial. Create a new profile with caching enabled:

Then refer to it in your GitHub Actions workflow:

      jobs:
        build:
    -     runs-on: ubuntu-latest
    +     runs-on: namespace-profile-speed-launch

Then, you either map a set of meaningful directories to be part of the cache, or use our namespacelabs/nscloud-cache-action action to do it for you.

Here are a few examples:

- Go: github.com/namespace-integration-demos/go-cache

- JavaScript/Yarn: github.com/namespace-integration-demos/cache-yarn-workspaces

- Nix: github.com/namespace-integration-demos/nix-cache-example

- Rust: github.com/namespace-integration-demos/rust-cache

Check out our documentation to learn more.

If there's a file, we can cache it. Although we provide tools to pre-configure certain languages or frameworks, you can set up any caching you want.
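As a rough sketch of the action-based option, here's what a Go workflow might look like. The profile label is the one used above; the `@v1` tag, the `path` input, and the listed directories are assumptions made for this example, so check the action's README and the demo repositories for the exact inputs.

```yaml
# Sketch only: the action version, input name, and paths are assumptions.
name: build
on: push

jobs:
  build:
    # Runner Profile with caching enabled.
    runs-on: namespace-profile-speed-launch
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-go@v5
        with:
          go-version-file: go.mod
          cache: false # skip the download/upload-based cache
      - name: Set up Go cache
        uses: namespacelabs/nscloud-cache-action@v1
        with:
          # Directories to keep on the cache volume across runs.
          path: |
            /home/runner/go/pkg/mod
            /home/runner/.cache/go-build
      - run: go build ./... && go test ./...
```

Any other directory can be listed under `path` in the same way, which is how you would cache tools that have no pre-configured support.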

Caching Docker images

If you use Docker or Docker Compose to start dependencies you use in tests, you know how much time you spend just downloading container images and unpacking them.

If you're building new images, we already help you with Remote Builds. But if you're just starting existing dependencies, it can be minutes before they're up and running and your tests can start.

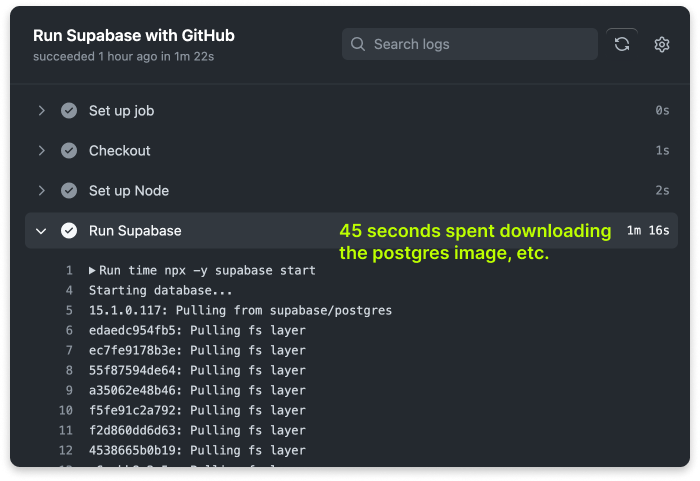

Here's an example. When running Supabase in development or as a test backend, it starts a set of containers, including Postgres.

On your workstation, subsequent runs are fast because those images are already present locally and ready to be used.

But that's not the case in CI: they're downloaded over and over.

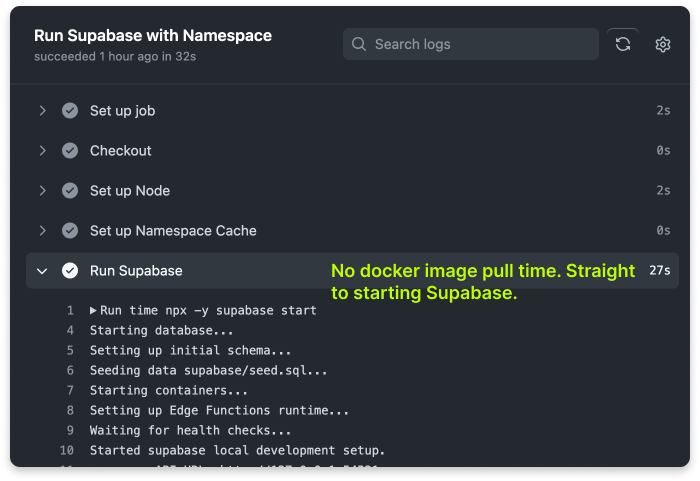

With Namespace caching, you can now also benefit from the same cross-invocation Docker image caching. Images that you re-use are then available in subsequent runs, without any manual management on your side.

Oh, and you don't need to worry about runaway cache sizes, as we'll clean up stale images for you (without affecting your test run time).

Enabling Docker image caching takes a single click: just flip the switch under Caching.

Check out our documentation to learn more.
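Nothing changes in the workflow itself. As an illustration, a job like the following is the kind that benefits, since the images it pulls in one run are available in subsequent runs. This is a hypothetical sketch: it assumes a docker-compose.yml at the repository root and a `make test` target, neither of which comes from this post.

```yaml
# Hypothetical job: assumes docker-compose.yml and a `make test` target exist.
name: integration
on: push

jobs:
  integration:
    # Runner Profile with Docker image caching switched on.
    runs-on: namespace-profile-speed-launch
    steps:
      - uses: actions/checkout@v4
      - name: Start test dependencies
        # --wait blocks until the services are running (and healthy, if they
        # define health checks).
        run: docker compose up -d --wait
      - name: Run tests
        run: make test
      - name: Stop test dependencies
        if: always()
        run: docker compose down
```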

Preview: Pre-installing Dependencies

If you use Playwright, Cypress or any framework that relies on installing new software (e.g. browsers), that installation is another source of repeated work that delays your builds and tests.

With Namespace, you can now pre-install dependencies into the environment that runs your tests. Rather than spending the installation time during the test run, we do the installation once and re-use the base environment so that it doesn't hurt reproducibility.

To start, you can specify a set of existing Ubuntu packages to include in your base image.

This feature is in Preview while we fine-tune error handling and the overall user experience. Over the next few weeks, we expect to add:

- Ability to specify additional packages using the Nix ecosystem;

- And the ability to customize the whole base image using a Dockerfile as the source of truth.

Check out an example: github.com/namespace-integration-demos/custom-runner-images.

Check out our documentation to learn more.

Faster Monorepo checkouts

Spending more and more time checking out your monorepo, or larger repositories?

We're also introducing a solution for you: namespacelabs/nscloud-checkout-action, a monorepo-focused version of GitHub's checkout action, which uses Cache Volumes to accelerate subsequent checkouts.

We've heard reports of larger repositories saving up to a minute of checkout time.

Check out our documentation to learn more.
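In a workflow, that would typically mean swapping the checkout step. A minimal sketch, assuming the action can stand in for actions/checkout without extra inputs and that a `v1` tag exists (both are assumptions; the documentation has the exact usage):

```yaml
# Sketch only: the version tag and drop-in usage are assumptions.
name: build
on: push

jobs:
  build:
    # The action relies on Cache Volumes, so use a profile with caching enabled.
    runs-on: namespace-profile-speed-launch
    steps:
      # Replaces `uses: actions/checkout@v4`.
      - uses: namespacelabs/nscloud-checkout-action@v1
      - run: git log -1 --oneline
```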

Introducing Runner Profiles

You already got a glimpse of Profiles above. They're our take on managing reusable environment configurations for your test runs in GitHub Actions.

We'll be building more on Profiles over the coming months, including:

- Observability: Understand the usage of each profile over time so you can optimize your resource usage.

- Configurable policies: Apply different usage policies per GitHub organization or repository.

- Cost enforcement: Create spend limits per profile.

Check out our documentation to start using Runner Profiles.

Coming soon: Apple Silicon

We hear from many of you that you'd like to be able to build and test not just on Linux, but also on macOS and Windows.

We hear you. We're starting with macOS (on Apple Silicon), as that's the most complex challenge.

We're bringing macOS support with the same Namespace approach, so you can expect a feature set similar to the one we already offer for Linux, including custom base images.

We're now getting ready to onboard some early customers. If you'd like to be part of the early access, drop us a note at support@namespace.so.

Coming soon: SOC 2 certification

Namespace is a security-centric company. We're extremely mindful of managing data and execution on your behalf, and we fully embrace a zero-trust approach to infrastructure management.

But we want to ensure that our customers understand our commitment to security, so a few months ago we started working towards our SOC 2 certification.

Getting audited is a team priority, and you can expect news early next year.

Wrapping up

Here at Namespace, we are committed to our customers and to continuously bringing you developer workflow improvements, whether in performance, understandability, or elsewhere.

Although we sell compute, we don't let that get in the way of giving you better options to reduce your run time.

We believe that if you go faster, we'll be better too.