Slow CI is a developer productivity killer. Waiting 10–20 minutes for a pull request to go green interrupts your flow, delays reviews, and adds up to hours of wasted time across a team every day. GitHub's default runners are a common bottleneck in CI workflows.

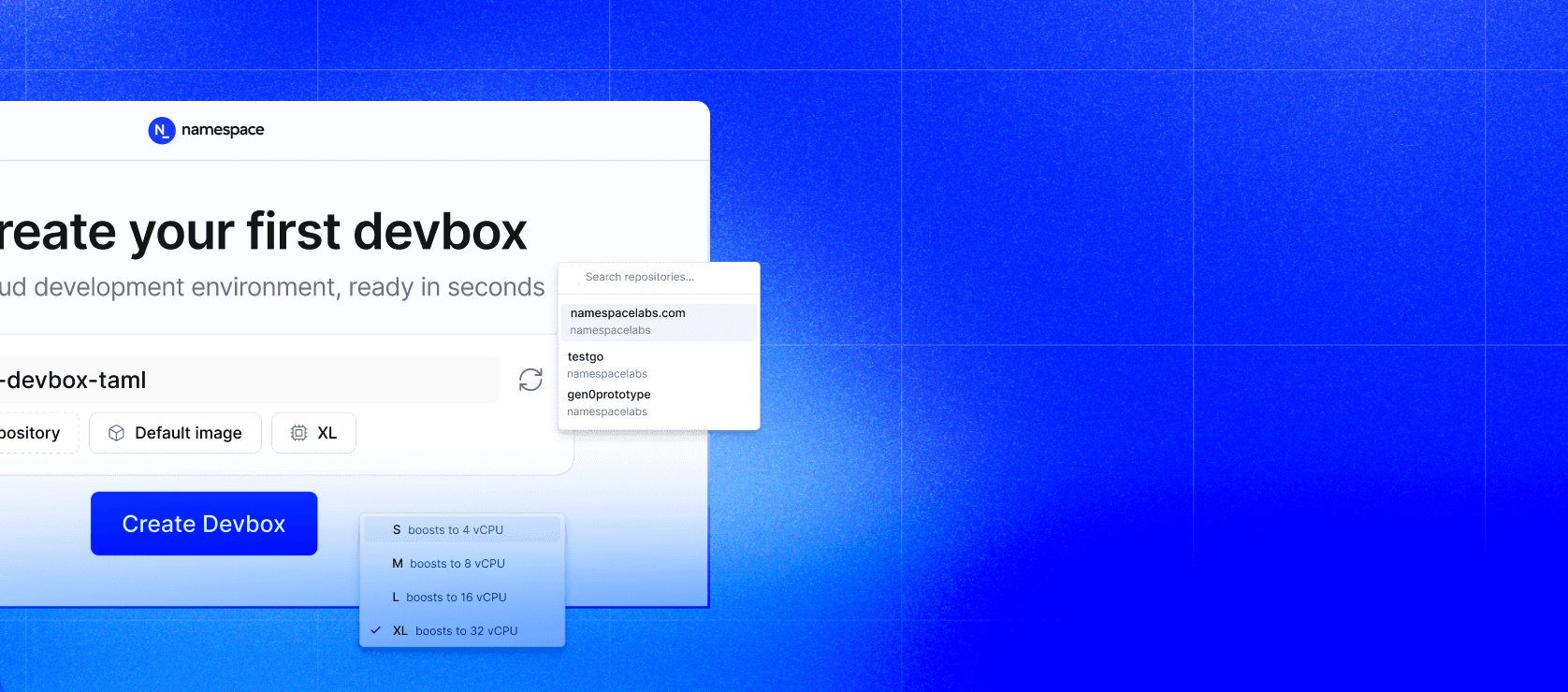

The good news: you don't need to rewrite your GitHub Actions pipelines to fix this. Namespace is a managed infrastructure platform that plugs directly into your existing workflows. Getting meaningfully faster builds is often just a label change and two new actions away. This post covers the three changes that move the needle most: faster runners, cached git checkouts, and persistent cache volumes.

1. Namespace Runners: Better Hardware, One-Line Change

The single highest-leverage change in most CI setups is running on faster hardware. Namespace's default runner shape is 8 vCPUs and 16 GB of RAM: 4x the CPU and roughly 2x the memory of a standard GitHub-hosted runner. Runners are powered by AMD EPYC processors (x64) or Apple M4 Pro and M5 Max (macOS/ARM), which consistently rank among the fastest available in third-party benchmarks.

Setup

- Connect Namespace to your GitHub organization via the Namespace dashboard.

- Install the Namespace GitHub App and grant it access to your repositories.

- Create a runner Profile (e.g., my-fast-runner) and pick a machine shape.

- Update the runs-on field in your workflow.

Before (standard GitHub runner)

    jobs:
      test:
        runs-on: ubuntu-latest # 2 vCPU, 7 GB RAM
        steps:
          - uses: actions/checkout@v4
          - uses: actions/setup-go@v6
            with:
              go-version: "1.22"
          - run: go test ./...

After (Namespace runner)

    jobs:
      test:
        runs-on: namespace-profile-my-fast-runner # 8 vCPU, 16 GB RAM
        steps:
          - uses: actions/checkout@v4
          - uses: actions/setup-go@v6
            with:
              go-version: "1.22"
          - run: go test ./...

The namespace-profile-<name> label maps directly to the profile you created in the dashboard. You can also use raw runner labels if you prefer not to manage profiles:

    # Directly select a shape using runner labels
    runs-on:
      - nscloud-ubuntu-22.04-amd64-8x16

Picking the Right Shape

Not all jobs need the same resources. Namespace lets you right-size per job:

    jobs:
      lint:
        # Lightweight: a small runner is fine
        runs-on: nscloud-ubuntu-22.04-amd64-2x4
      build:
        # CPU-intensive compilation: go bigger
        runs-on: nscloud-ubuntu-22.04-amd64-16x32
      e2e-tests:
        # Memory-hungry test suite
        runs-on: nscloud-ubuntu-22.04-amd64-8x32

Tip: Use the runforesight/workflow-telemetry-action to profile CPU and memory utilization before choosing a shape. If either metric is consistently at 100%, you're leaving performance on the table.
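As a rough sketch of that profiling step, the telemetry action can be added as the first step of a job so it samples everything that runs after it. The `@v1` tag and the job layout here are assumptions; check the action's README for the current release and any permissions it needs.

```yaml
jobs:
  build:
    runs-on: ubuntu-latest # profile on your current runner first
    steps:
      # Assumed version tag; collects CPU and memory metrics for the rest of the job
      - name: Collect workflow telemetry
        uses: runforesight/workflow-telemetry-action@v1
      - uses: actions/checkout@v4
      - run: go build ./...
```

Running this for a few builds before switching gives you a baseline to compare shapes against.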

2. Namespace Git Checkout: Skip the Clone, Use a Mirror

actions/checkout clones your repository from scratch on every run. For large repos, like monorepos with years of history, repos with heavy LFS usage, or anything with many submodules, this can easily eat 1–3 minutes per job. Namespace solves this with nscloud-checkout-action, which maintains a persistent git mirror on a Cache Volume attached to your runner.

On the first run, a full clone is performed and stored in the volume. Every subsequent run fetches only the delta since the last checkout. For most repos, this brings git checkout time from minutes down to seconds.
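To make the mechanism concrete, here is a conceptual sketch of the mirror-then-fetch pattern written as a plain workflow step. It is not how nscloud-checkout-action is implemented; `MIRROR_DIR` is a hypothetical path on a persistent volume, and the sketch assumes a public repository so no credentials are needed.

```yaml
steps:
  - name: Checkout via a local mirror (illustrative only)
    env:
      # Hypothetical location on a volume that survives between runs
      MIRROR_DIR: /cache/git-mirrors/${{ github.repository }}.git
      REPO_URL: https://github.com/${{ github.repository }}.git
    run: |
      if [ -d "$MIRROR_DIR" ]; then
        # Later runs: fetch only objects added since the last run
        git -C "$MIRROR_DIR" fetch --prune origin
      else
        # First run: full mirror clone onto the persistent volume
        mkdir -p "$(dirname "$MIRROR_DIR")"
        git clone --mirror "$REPO_URL" "$MIRROR_DIR"
      fi
      # Create the working copy from local disk, then pin the commit under test
      git clone "$MIRROR_DIR" src
      git -C src checkout --detach "$GITHUB_SHA"
```

The expensive network transfer happens once; after that, each run pays only for the new commits plus a local clone.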

Prerequisites

Enable Git repository checkouts caching in your runner profile configuration in the Namespace dashboard. This attaches the cache volume that stores the git mirrors.

Before (standard checkout)

    jobs:
      test:
        runs-on: namespace-profile-my-fast-runner
        steps:
          - uses: actions/checkout@v4 # Fresh clone every time
          - run: go test ./...

After (Namespace cached checkout)

    jobs:
      test:
        runs-on: namespace-profile-my-fast-runner
        steps:
          - uses: namespacelabs/nscloud-checkout-action@v8 # Uses cached mirror
            with:
              path: my-repo # Optional: checkout into subdirectory
          - run: cd my-repo && go test ./...

Checking Out Multiple Repositories

A common pattern in monorepos or multi-service architectures is checking out several repos in one job. nscloud-checkout-action handles this cleanly: each repo gets its own mirror entry in the cache volume.

    jobs:
      integration-test:
        runs-on: namespace-profile-my-fast-runner
        steps:
          - name: Checkout main service
            uses: namespacelabs/nscloud-checkout-action@v8
            with:
              path: main-service
          - name: Checkout shared library
            uses: namespacelabs/nscloud-checkout-action@v8
            with:
              repository: my-org/shared-lib
              path: shared-lib
          - name: Run integration tests
            run: |
              cd main-service
              go test ./integration/...

Submodules

Submodule updates are also accelerated through the same mirror mechanism:

    steps:
      - uses: namespacelabs/nscloud-checkout-action@v8
        with:
          submodules: recursive
          path: my-repo

3. Namespace Cache Volumes: Persistent, High-Speed Caching

GitHub's built-in actions/cache works by uploading and downloading tarballs to remote storage at the start and end of every job. For large dependency trees, like a Node.js project with thousands of packages, a Go module cache, and a Rust build cache, these transfers can take 2–5 minutes per job, and the 10 GB per-repo cache limit is easy to hit.
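For comparison, a typical actions/cache setup for a Go project looks roughly like the sketch below. The paths and cache key are common conventions, not taken from any specific project. The restore (download) happens when the step runs, and the save (upload) happens in a post-job hook.

```yaml
steps:
  - uses: actions/checkout@v4
  - name: Restore Go caches (tarball download)
    uses: actions/cache@v4
    with:
      path: |
        ~/go/pkg/mod
        ~/.cache/go-build
      # A new key is written whenever go.sum changes
      key: ${{ runner.os }}-go-${{ hashFiles('**/go.sum') }}
      restore-keys: |
        ${{ runner.os }}-go-
  - run: go test ./...
```

Every distinct key stores its own archive, which is how multi-language repositories run into the per-repo limit.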

Namespace Cache Volumes are different. They are persistent volumes mounted directly to the runner via NVMe-backed local storage. There are no upload/download steps. The cache is just there, instantly readable and writable, with support for up to hundreds of GB.

Enabling Cache Volumes

Go to your runner profile in the Namespace dashboard and enable caching. Set a minimum size of 20 GB to start. Then use nscloud-cache-action to mount the volume in your workflow.

Framework-Specific Caching

nscloud-cache-action has built-in support for popular ecosystems. You specify what you want cached by name:

    jobs:
      build:
        runs-on: namespace-profile-my-fast-runner
        steps:
          - uses: namespacelabs/nscloud-checkout-action@v8
          # Cache Go modules and build cache
          - name: Setup Go cache
            uses: namespacelabs/nscloud-cache-action@v1
            with:
              cache: go
          - uses: actions/setup-go@v6
            with:
              go-version: "1.22"
              cache: false # Disable GitHub's built-in cache and use Namespace instead
          - run: go build ./...
          - run: go test ./...

Multi-Framework Caching

You can cache multiple ecosystems in a single step:

    jobs:
      full-stack-test:
        runs-on: namespace-profile-my-fast-runner
        steps:
          - uses: namespacelabs/nscloud-checkout-action@v8
          - name: Setup all caches
            uses: namespacelabs/nscloud-cache-action@v1
            with:
              cache: |
                go
                node
                rust
                python
          - uses: actions/setup-go@v6
            with:
              go-version: "1.22"
              cache: false
          - uses: actions/setup-node@v6
            with:
              node-version: "20"
              cache: "" # Disable GitHub's npm cache
          - run: npm ci
          - run: go build ./...

Caching Arbitrary Paths

For tools or directories not covered by built-in presets, use the path option:

    steps:
      - name: Cache Playwright browsers
        uses: namespacelabs/nscloud-cache-action@v1
        with:
          path: |
            ~/.cache/ms-playwright
            ./.cache/custom-tool
      - name: Install Playwright
        run: npx playwright install --with-deps

Toolchain Caching (setup-* actions)

When you enable Toolchain downloads in your profile config, actions/setup-go, actions/setup-python, actions/setup-node, and similar actions automatically store their downloads on the Cache Volume. No workflow changes are needed; just disable their built-in caching flags so they don't double-cache:

    steps:
      - uses: actions/setup-python@v6
        with:
          python-version: "3.12"
          cache: "" # Let Namespace handle this
      - uses: actions/setup-node@v6
        with:
          node-version: "20"
          cache: ""

Cache Branch Isolation

By default, the cache volume is shared across all branches of a repository. You can restrict which branches may write to the cache (e.g., only main) while still allowing all branches to read from it. This prevents cache poisoning from untrusted PRs while preserving fast reads:

    # For jobs that should NOT update the cache (e.g., PRs from forks)
    jobs:
      test:
        runs-on:
          - nscloud-ubuntu-22.04-amd64-8x16-with-cache
          - nscloud-cache-tag-my-app-cache
          - nscloud-cache-size-50gb
          - nscloud-cache-exp-do-not-commit # Read cache but don't persist changes

Putting It All Together

Here's a complete workflow for a typical Go service that combines all three techniques: fast runners, cached checkout, and Cache Volumes.

    name: CI

    on:
      push:
        branches: [main]
      pull_request:

    jobs:
      test:
        runs-on: namespace-profile-go-service-ci
        steps:
          - name: Checkout (cached)
            uses: namespacelabs/nscloud-checkout-action@v8
          - name: Setup dependency caches
            uses: namespacelabs/nscloud-cache-action@v1
            with:
              cache: go
          - name: Setup Go
            uses: actions/setup-go@v6
            with:
              go-version: "1.22"
              cache: false # Namespace handles this
          - name: Build
            run: go build ./...
          - name: Test
            run: go test -race ./...

      lint:
        runs-on: nscloud-ubuntu-22.04-amd64-4x8 # Smaller shape for linting
        steps:
          - uses: namespacelabs/nscloud-checkout-action@v8
          - uses: namespacelabs/nscloud-cache-action@v1
            with:
              cache: go
          - uses: actions/setup-go@v6
            with:
              go-version: "1.22"
              cache: false
          - name: Run golangci-lint
            uses: golangci/golangci-lint-action@v6
            with:
              version: latest

Summary

Each of these optimizations is independent, so you can adopt them incrementally. Start by swapping the runner label. That single change typically cuts build times by 30–60% through better CPU alone. Then add nscloud-checkout-action to eliminate full clones on every run, saving 1–3 minutes per job on larger repos. Finally, layer in Cache Volumes to replace GitHub's tarball-based caching with direct NVMe-mounted storage, cutting another 2–5 minutes per job.

Used together, all three can take a sluggish 20-minute pipeline down to under 5.